
In this post, I am sharing how to use the sp_estimate_data_compression_savings system stored procedure to check an object's current size and its estimated size after compression in SQL Server.

I often find people using the CHAR data type for fixed-length storage while the values they actually store are shorter than the declared length, so the column spends space on padding.

The CHAR column is just one example; there are many other situations where we need to check a table's actual size and how much space compression could save.
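To make the CHAR point concrete, here is a minimal sketch. The variable names are made up for illustration, and the declared length of 50 is an arbitrary choice. It compares how many bytes the same three-character value occupies as CHAR and as VARCHAR:

```sql
-- Hypothetical variables: CHAR pads the value to the declared length,
-- VARCHAR stores only the characters supplied.
DECLARE @fixed CHAR(50) = 'abc';
DECLARE @variable VARCHAR(50) = 'abc';

SELECT DATALENGTH(@fixed)    AS char_bytes      -- 50
      ,DATALENGTH(@variable) AS varchar_bytes;  -- 3
```

ROW compression stores fixed-length types in a variable-length format, so padding of this kind is one of the things the estimate can show as recoverable space.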

Once we have the actual size and the estimated savings, we can choose a suitable compression type for each object. If your table has multiple indexes, you can compare the estimates for all of them and decide which indexes are worth compressing.

We can use sp_estimate_data_compression_savings on a table with a clustered index, on a non-clustered index, and on a heap. You can evaluate an object for both ROW and PAGE compression.

Below is a small demonstration:

Create a sample table:

```sql
CREATE TABLE tbl_DumpData
(
    ID INT
    ,RandomNumber BIGINT
    ,CONSTRAINT pk_tbl_DumpData_ID PRIMARY KEY(ID)
)
GO
```

Insert dummy data:

```sql
;WITH CTE AS
(
    SELECT 1 ID
    UNION ALL
    SELECT ID + 1
    FROM CTE
    WHERE ID + 1 <= 1000000
)
INSERT INTO tbl_DumpData(ID,RandomNumber)
SELECT
    ID
    ,CONVERT(INT, CONVERT(VARBINARY(4), NEWID(), 1)) AS RandomNumber
FROM CTE
OPTION (MAXRECURSION 0)
GO
```

Check the inserted records:

```sql
SELECT *
FROM tbl_DumpData
```
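Before estimating savings, it can help to record the table's actual size as a baseline. The sketch below assumes the table was created in the dbo schema, which is the schema the later calls in this post use. It counts the rows without returning all of them to the client and then reports the space currently used:

```sql
-- Row count without pulling one million rows to the client
SELECT COUNT(*) AS total_rows
FROM dbo.tbl_DumpData;
GO

-- Reserved, data, and index size of the table as it is stored right now
EXEC sp_spaceused @objname = N'dbo.tbl_DumpData';
GO
```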

Check space for ROW:

```sql
EXEC sp_estimate_data_compression_savings
    @schema_name = 'dbo',
    @object_name = 'tbl_DumpData',
    @index_id = NULL,
    @partition_number = NULL,
    @data_compression = 'ROW'
```

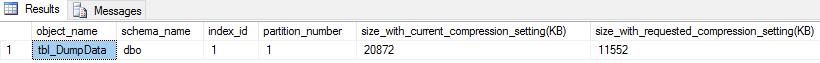

Result: Compare the size_with_current_compression_setting and size_with_requested_compression_setting columns, both reported in KB. The difference between them is the estimated saving.

After applying ROW compression, the object size should drop to roughly size_with_requested_compression_setting.
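If you prefer a percentage to reading the two columns by eye, one option is to capture the procedure's output and compute it. This is a sketch only: the temp table name and its column names are hypothetical, and it assumes the procedure returns its documented eight-column result set on your SQL Server version.

```sql
-- Hypothetical temp table; the columns mirror the procedure's result set in order
CREATE TABLE #RowEstimate
(
    [object_name]             SYSNAME
    ,[schema_name]            SYSNAME
    ,index_id                 INT
    ,partition_number         INT
    ,current_size_kb          BIGINT
    ,requested_size_kb        BIGINT
    ,sample_current_size_kb   BIGINT
    ,sample_requested_size_kb BIGINT
);

INSERT INTO #RowEstimate
EXEC sp_estimate_data_compression_savings
    @schema_name = 'dbo',
    @object_name = 'tbl_DumpData',
    @index_id = NULL,
    @partition_number = NULL,
    @data_compression = 'ROW';

-- Estimated saving per index and partition; NULLIF avoids dividing by zero
SELECT
    index_id
    ,partition_number
    ,current_size_kb
    ,requested_size_kb
    ,CAST(100.0 * (current_size_kb - requested_size_kb)
          / NULLIF(current_size_kb, 0) AS DECIMAL(9,2)) AS estimated_saving_pct
FROM #RowEstimate;

DROP TABLE #RowEstimate;
```

A negative percentage would mean the estimate expects the object to grow under the requested setting.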

Check space for PAGE:

```sql
EXEC sp_estimate_data_compression_savings
    @schema_name = 'dbo',
    @object_name = 'tbl_DumpData',
    @index_id = NULL,
    @partition_number = NULL,
    @data_compression = 'PAGE'
```

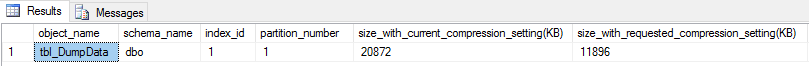

Result: Again compare size_with_current_compression_setting with size_with_requested_compression_setting, this time for PAGE compression.

After applying PAGE compression, the object size should drop to roughly size_with_requested_compression_setting.
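Once the estimates justify it, the compression itself is applied with a rebuild. The following is a minimal sketch, assuming you settled on PAGE compression for the demo table; substitute ROW if that estimate suits your workload better. The query against sys.partitions afterwards confirms which setting is in effect.

```sql
-- Rebuild the table (its clustered index) with the chosen compression type.
-- PAGE is an assumption here; use ROW if that was the better estimate.
ALTER TABLE dbo.tbl_DumpData
REBUILD WITH (DATA_COMPRESSION = PAGE);
GO

-- Confirm the compression setting now stored for each index and partition
SELECT
    index_id
    ,partition_number
    ,data_compression_desc
FROM sys.partitions
WHERE object_id = OBJECT_ID(N'dbo.tbl_DumpData');
GO
```

A non-clustered index is compressed separately with ALTER INDEX ... REBUILD WITH (DATA_COMPRESSION = ...), which is where comparing per-index estimates pays off.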